SAFE AI

Standards and Assurance Framework for Ethical AI

SAFE AI gives humanitarian organisations the governance infrastructure to deploy AI with confidence – managing risk, distributing accountability, and keeping communities in the loop. Free to use. Built for every humanitarian, not just AI engineers.

PAGE CONTENTS

Why now | How SAFE AI works | Resources | Videos: our journey to SAFE AI | What we advocate for | SAFE AI contributors | Stay connected

WHY NOW

Built for organisations that want to act – not stall

AI is already reshaping humanitarian operations: needs assessments, cash transfer targeting, anticipatory action systems that trigger financing before a crisis hits. For organisations navigating this fast-moving landscape, the question is no longer whether to engage with AI – it’s how to do so responsibly, without carrying the full weight of that risk alone.

Right now, there is no shared infrastructure to help you do that. Global frameworks like the EU AI Act were not designed for fragile and conflict-affected environments. Individual agency policies can only go so far. And as funding pressure mounts, the cost of getting AI wrong – operationally, reputationally, politically – is rising.

SAFE AI changes that calculus.

-

No agency should carry sole accountability for high-stakes AI deployment. SAFE AI distributes that accountability across a shared architecture. When something goes wrong, the question shifts from “Why did you do this?” to “Did you follow the standard?”

-

Governance infrastructure gives organisations permission to move forward rather than reasons to stall, and positions them to integrate the next wave of AI capabilities within a framework that already exists.

-

Aid spending faces sustained legislative and public scrutiny. A sector that cannot answer questions about its own AI governance is exposed on two fronts at once: the use of AI, and the absence of oversight. SAFE AI closes both gaps simultaneously.

THE FRAMEWORK

How SAFE AI works

SAFE AI takes organisations through the four stages of implementing AI in a humanitarian context: problem definition and concept; design; development; and deployment and monitoring. At each stage, formal Decision Gates test whether the work should proceed, against humanitarian principles, protection requirements, and the conditions for responsible refusal.

The SAFE AI Transparency Card

The Transparency Card is the central governance record. It accumulates across the lifecycle of an AI deployment, holding the outputs of every stage, every assessment, and every decision in a single auditable document. It is the record against which the system is held to account.
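For teams that keep governance records in software, the accumulating nature of the Transparency Card can be pictured as an append-only log of Decision Gate outcomes. The sketch below is illustrative only: the class names, field names, and the `may_proceed` rule are assumptions made for this example, not part of the SAFE AI Framework or its tooling. Only the four stage names come from the Framework description above.

```python
from dataclasses import dataclass, field

# The four lifecycle stages named by the Framework.
STAGES = (
    "problem definition and concept",
    "design",
    "development",
    "deployment and monitoring",
)


@dataclass(frozen=True)
class GateDecision:
    """One Decision Gate outcome (hypothetical structure)."""
    stage: str
    proceed: bool      # False records a responsible refusal
    rationale: str


@dataclass
class TransparencyCard:
    """Hypothetical single record that accumulates across the lifecycle."""
    system_name: str
    decisions: list = field(default_factory=list)

    def record(self, stage: str, proceed: bool, rationale: str) -> None:
        # Reject stages the Framework does not define.
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage!r}")
        # Entries are only ever appended, so earlier decisions stay auditable.
        self.decisions.append(GateDecision(stage, proceed, rationale))

    def may_proceed(self) -> bool:
        # Assumed rule: work continues only if no gate has recorded a refusal.
        return all(d.proceed for d in self.decisions)


card = TransparencyCard("needs-assessment assistant")
card.record("problem definition and concept", True, "fits humanitarian principles")
card.record("design", False, "protection requirements not met")
```

In this sketch a refusal is stored in the same record as an approval, reflecting the idea that a documented decision not to proceed is itself a valid outcome.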

Community in the Loop

Community in the Loop is a governance requirement embedded at every stage of the SAFE AI Journey. Affected communities hold knowledge about how AI systems behave in their context that internal testing does not generate. SAFE AI treats community participation as a source of governance evidence, with documented influence over the system's design. For higher-risk use cases, community engagement is a condition of deployment.

Grounded in humanitarian principles

SAFE AI is built from humanitarian principles. Humanity, impartiality, neutrality, and independence shape every decision the Framework asks organisations to make. They are tested at each Decision Gate. Where AI use cannot be reconciled with them, responsible refusal applies. A documented decision that AI is not the right tool is itself a SAFE AI outcome.

Proportionate to risk

SAFE AI applies three risk tiers. The depth of governance scales to the stakes of the use case, regardless of the size of the organisation deploying it.

Tier 1: Baseline. Internal, advisory AI use. Light-touch governance.

Tier 2: Enhanced. AI shapes operational decisions, with human oversight. Full assessment and assurance required.

Tier 3: High risk. AI directly affects people's access to assistance, protection, or information. Community co-design and ongoing monitoring are mandatory.
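As a rough illustration of how the three tiers might be applied during screening, the sketch below maps two yes/no questions to a tier. The question names, the `classify` function, and the `REQUIREMENTS` table are hypothetical and simplified for this example. The Framework itself defines the actual assessment, and the requirements listed are only those stated in the tier descriptions above.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    BASELINE = 1    # internal, advisory AI use
    ENHANCED = 2    # AI shapes operational decisions, with human oversight
    HIGH_RISK = 3   # AI directly affects access to assistance, protection, or information


@dataclass(frozen=True)
class UseCase:
    # Hypothetical screening questions, not taken from the Framework text.
    affects_access: bool                 # assistance, protection, or information
    shapes_operational_decisions: bool


def classify(use_case: UseCase) -> Tier:
    # Check the highest-stakes condition first so it always takes precedence.
    if use_case.affects_access:
        return Tier.HIGH_RISK
    if use_case.shapes_operational_decisions:
        return Tier.ENHANCED
    return Tier.BASELINE


# Requirements as stated in the tier descriptions; not an exhaustive list.
REQUIREMENTS = {
    Tier.BASELINE: ["light-touch governance"],
    Tier.ENHANCED: ["full assessment", "assurance"],
    Tier.HIGH_RISK: ["community co-design", "ongoing monitoring"],
}

tier = classify(UseCase(affects_access=False, shapes_operational_decisions=True))
```

Organisation size is deliberately absent from the inputs: the tier depends only on the stakes of the use case.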

RESOURCES

SAFE AI in practice

The SAFE AI Framework

The full governance framework for ethical AI in humanitarian action. Free to use. Scales to fit any organisation. Available now.

-

New to humanitarian AI? Start here

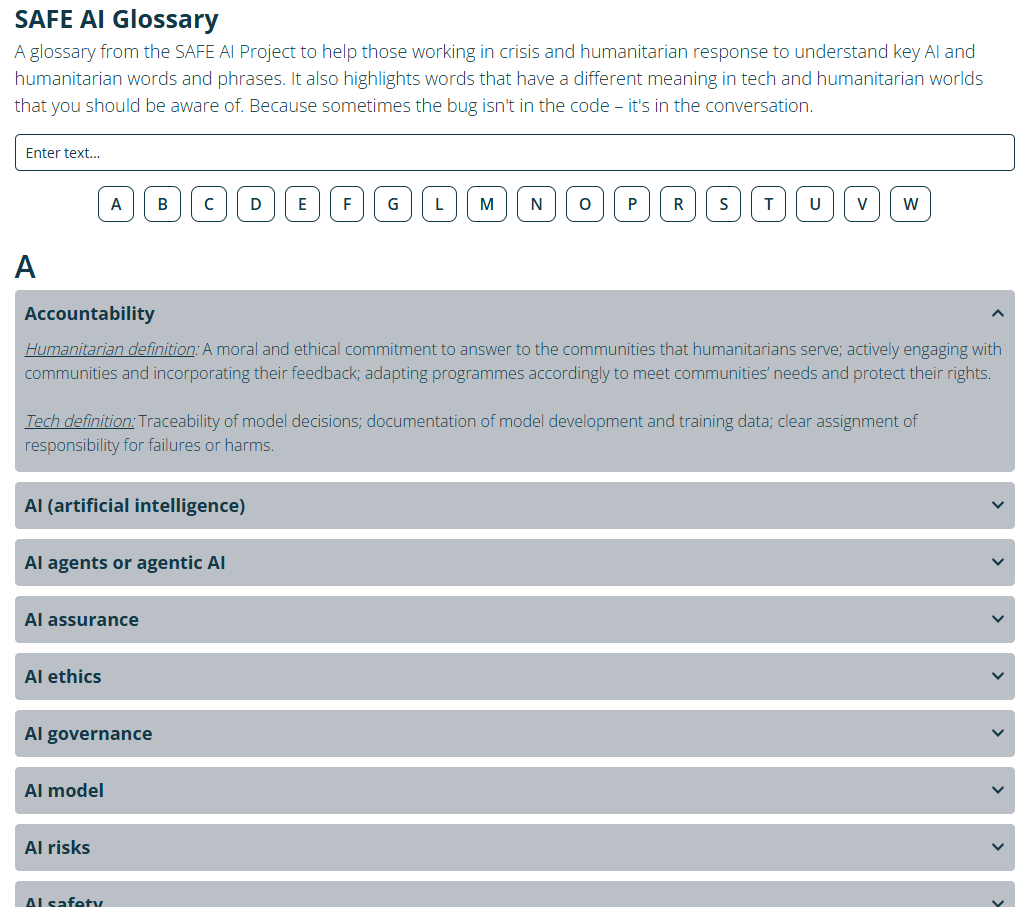

GLOSSARY

Plain-language definitions to help organisations build better, safer and more effective AI partnerships. No technical background needed.

-

Step by step: how SAFE AI contributes to trustworthy AI

BRIEFING NOTE

How each step of the SAFE AI Framework supports NIST’s seven characteristics of trustworthy AI.

-

Risks in humanitarian AI

BRIEFING NOTE

A quick briefing on the risks associated with humanitarian AI, and how the SAFE AI Framework and tools respond to each.

-

The governance gap in humanitarian AI: addressing the structural gap between global frameworks and operational reality

BRIEFING PAPER

The analytical foundation for SAFE AI – establishing the nature and scale of the structural governance gap, and why individual agencies cannot fill it alone.

-

From experimentation to engagement

ACADEMIC PAPER

Evidence on the paradox of participatory AI and power in contexts of forced displacement and humanitarian crises.

-

Co-designing AI solutions with crisis-affected communities

HOW-TO NOTE

Practical guidance for meaningful community co-design of AI in humanitarian contexts.

-

Co-design vs. user-centred design for AI solutions

FACTSHEET

A clear breakdown of the difference – and why it matters for responsible humanitarian AI.

-

Addressing power dynamics in participatory AI for crisis-affected communities

POLICY BRIEF

Research on the structural challenges of participation and power when AI meets forced displacement.

THE CDAC STORY

Our journey to SAFE AI

CDAC’s commitment to community voice in humanitarian response predates AI: it is the foundation of everything we do.

As AI began reshaping humanitarian operations, we asked: what happens to participation and accountability when decisions are made by systems communities cannot see or contest? These videos trace that arc.

Perspectives on AI from Kakuma refugee camp

The film that started it all: we consulted crisis-affected communities in Kenya directly about AI governance, asking whether this moment can disrupt power imbalances rather than entrench them.

Dr Abeba Birhane: AI For Good?

A critical challenge to the sector at a watershed moment: AI for genuine social good must be built, controlled and owned by the communities it serves.

Nyalleng Moorosi: Data, power and participation

The full stakes laid out: AI promises participation at scale, but without ethical governance it risks deepening the inequalities and harms humanitarian action seeks to address.

OUR POSITION

What we advocate for

SAFE AI is a framework, but frameworks need backing. These are our three key asks of donors, governments and the sector to make humanitarian AI safe, accountable and ethical.

SAFE AI contributors

-

Helen McElhinney

Founding architect, SAFE AI & Executive Director, CDAC Network

-

Anjali Mazumder

Research Director, AI, Accountability, Inclusion & Rights, The Alan Turing Institute

-

Michael Tjalve

Founder, Humanitarian AI Advisory

-

Sarah Spencer

Founding architect, SAFE AI

-

Suzy Madigan

CDAC Network expert consultant

-

Shruti Viswanathan

CDAC Network expert consultant

STAY CONNECTED

Join the SAFE AI community

The Framework is now live. Register to stay connected with updates, guidance, sector developments and Phase 2 news.

This project has been funded by UK International Development from the UK government.